In a recent analysis by Conductor, out of 21.9 million searches, AI Overviews appeared in more than 5.5 million of them. That’s one in four queries where the click economy never even has a chance to begin.

With AI search traffic projected to exceed traditional search by 2028, expect that share to grow.

Welcome to the new world of AI discovery, where users get answers, not links. Here, visibility isn’t earned through blue links. It’s negotiated in real time inside AI summaries: answers that large language models (LLMs) synthesize from multiple sources.

This isn’t the death of SEO. It’s the widening of its battlefield.

We’re stepping into an environment where AI isn’t just retrieving information—it’s curating it, deciding which brands are credible enough to cite, mention, and surface as authoritative sources.

The shift mimics what happened when digital media wiped out print dominance: attention didn’t disappear, it moved. And marketers who followed it early won big.

SEO success in 2026 won’t be measured only by traffic graphs or position-tracking dashboards. It will be measured by how effectively your AI content citation strategy:

- Ensures your research becomes part of an AI answer.

- Gets your perspective quoted in an overview.

- Earns your brand a seat inside LLM-generated recommendations.

Traditional SEO rewarded displacement. Be better than the article above you and push it off page one. The new model rewards differentiating value.

LLMs stitch their responses together from multiple sources; your job is to say something no one else is saying, backed by experience, data, and perspective—not re-written summaries of what already exists.

The old SEO question, “How do I rank?”, departs, and a new one steps in: “How do I make content visible to AI models and show up wherever the answers are being generated?”

And that’s where our guide on AI citable content begins.

How AI tools discover and cite content

To understand how to make AI citable content, it helps to first understand how AI models interpret information, because the process is radically different from the traditional search most SEO teams know.

Search engines crawl pages. AI models interpret them.

Traditional search crawlers evaluate markup, metadata, link structures, and schema to understand what a page is about. Ranking is driven by signals like backlinks, relevance scoring, and technical structure.

LLMs don’t process content as crawlers do. They:

- Break text into tokens.

- Analyze semantic relationships between words, sentences, and concepts.

- Use attention mechanisms to determine meaning and relevance.

An LLM’s objective is simple but demanding: deliver helpful, accurate information while minimizing hallucination. That priority shapes what it chooses to cite.

What AI systems evaluate when selecting content to cite

AI search uses deeper linguistic and factual evaluation. Content that gets cited by AI systems typically shows:

- Clarity and conciseness

- Explicit attribution for claims and data points

- Topical authority

- AI-friendly content structure with logical, parse-friendly formatting

When deciding what to include in an AI-generated answer, models evaluate:

- The order and flow of ideas.

- Hierarchy signaled through headings (H2s, H3s).

- Formatting that makes key information scannable (bullets, tables, summaries).

- Reinforcement and repetition that indicate importance.

This explains why:

A clearly structured article with clean formatting can be cited by AI systems even if it has minimal schema markup — while a heavily optimized but chaotic post is ignored.

Moreover, when an AI generates a response, it is not pulling a single source into a single result, as in a SERP snippet.

It is building a composite answer across multiple documents, extracting sentences, definitions, or step-by-step instructions. The sections that get pulled most often are those that:

- Express one idea per segment.

- Use consistent terminology.

- Follow a predictable instructional format (FAQs, definitions, how-to steps, checklists).

- Avoid burying information under sales fluff or long paragraphs.

Said another way: the easier your content is to understand and segment, the easier it is for AI systems to quote or paraphrase.
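To make “easy to segment” concrete, here is a minimal Python sketch of how a retrieval pipeline might split a page into heading-delimited chunks. It illustrates the idea and is not a description of how any specific AI system works. The function name `split_into_segments` and the markdown-style `#` headings are assumptions made for the example.

```python
import re


def split_into_segments(markdown_text: str) -> list[dict]:
    """Split a markdown-style document into heading-delimited segments.

    Hypothetical helper: real AI pipelines chunk content in their own ways.
    """
    segments = []
    current = {"heading": None, "body": []}
    for line in markdown_text.splitlines():
        match = re.match(r"^(#{1,6})\s+(.*)$", line)
        if match:
            # A new heading closes the segment collected so far.
            if current["heading"] is not None or current["body"]:
                segments.append(current)
            current = {"heading": match.group(2).strip(), "body": []}
        elif line.strip():
            current["body"].append(line.strip())
    # Flush the final segment.
    if current["heading"] is not None or current["body"]:
        segments.append(current)
    return [
        {"heading": s["heading"], "text": " ".join(s["body"])}
        for s in segments
    ]
```

A page with one idea per heading yields short, self-contained segments. A page that buries several ideas under one heading yields a single long segment that is harder to quote cleanly.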

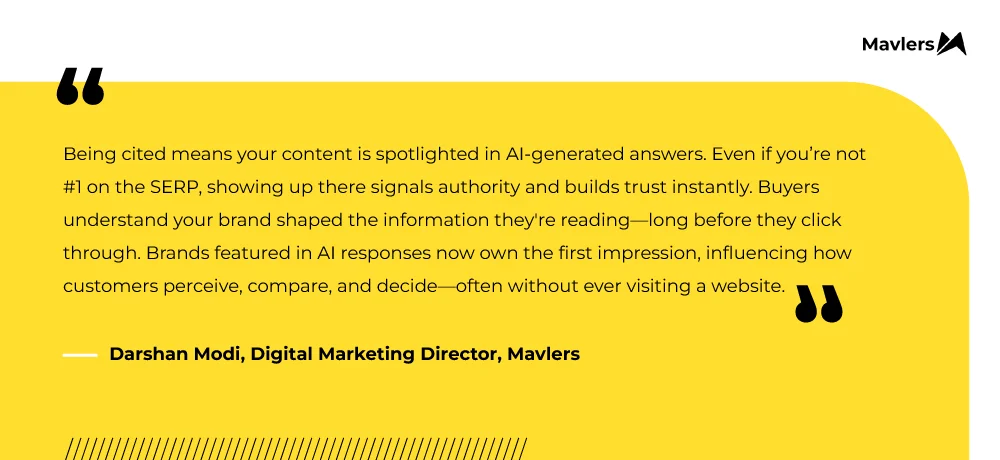

That said, authority still matters

Even though structure aids extraction, visibility determines LLM-referenceability.

From the Experts at Mavlers –

AI systems—including AI Overviews and Gemini—still prioritize trusted sources as their starting point. Pages with strong E-E-A-T, backlinks, and broad brand presence get chosen more often.

And it’s not always the top result that wins the citation.

If you observe carefully, lower-ranking but more authoritative pages often outperform weaker pages sitting in Position 1. Brands that dominate high-quality external mentions (reviews, forums, curated lists) are cited more frequently because they already shape the conversation.

The key takeaway: write deliberately and structure every content piece strategically. The clearer and more comprehensive it is, the more likely it will be understood and cited by LLMs, increasing its influence on AI-generated outputs.

Check out the following blogs for an in-depth exploration of AI Overviews and AEO strategies:

- SEO vs. AEO: Which Strategy Should You Focus On in 2025?

- Rising beyond the blue links ~ Mastering Google AI overviews for SEO success in 2025

Crafting an AI content citation strategy: how to make content AI-citable

TL;DR: AI-ready content guidelines

- AI citable content is clear, structured, and easy to extract.

- Build pages around real questions and intent.

- Back claims with evidence, data, and context, not vague opinions.

- Publish original insight (research, usage data, expert voices, lessons learned) that AI can’t source anywhere else.

- Use clean formatting: headings, lists, tables, summaries—so models can parse confidently.

- Support it with technical signals (schemas, freshness, discoverability) to help AI trust and surface your content.

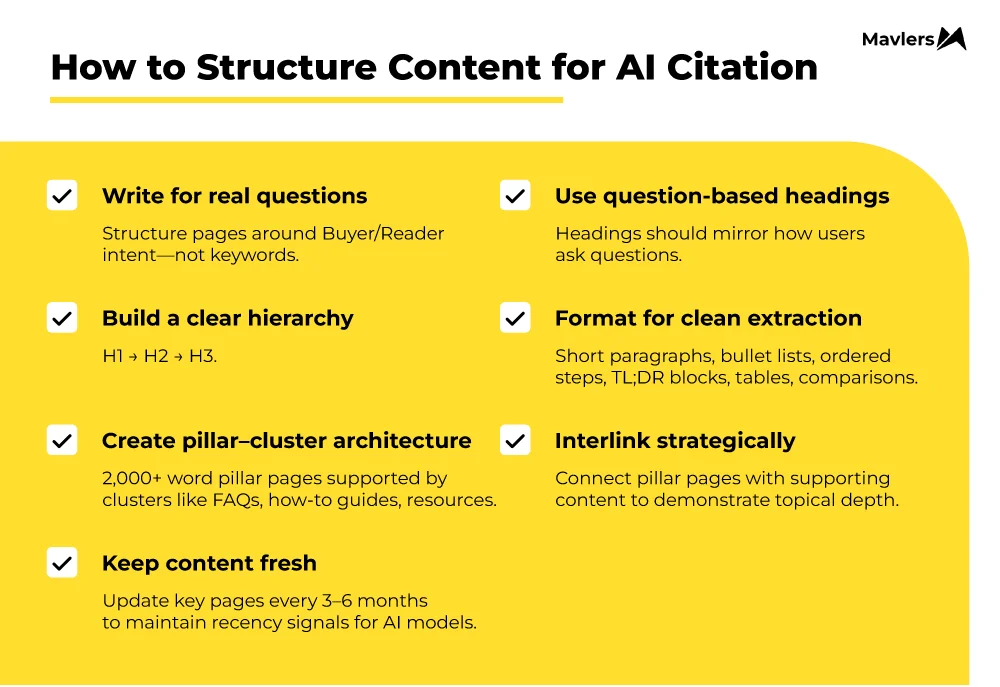

Strategy 1: Create an AI-friendly content structure

If you want to make content AI-citable, you need to stop writing pages that ramble and start building pages that teach.

This essentially means creating a well-structured page that serves as a learning node: something a model can understand, extract from, and assemble into responses.

That structure carries more LLM-referenceability than keyword density or clever headlines ever will.

Such content is easier for LLMs to absorb and break into semantic units, which in turn helps them determine which pieces are clear, complete, and worth reusing in an answer.

For AI-friendly content structure:

- Arrange your content around real questions and a logical hierarchy. That way, LLMs can match your sections to the queries users actually ask.

- Write headings as natural questions—like “Why isn’t my content being cited by AI search?” They sound less robotic and map directly to intent, which increases the odds of being pulled into summaries or AI Overviews.

Formatting decisions matter too. LLMs look for segmentation cues. These cues work like extraction points that signal to AI where a clean citation begins and ends. Some of these cues are:

- Short paragraphs with one idea each.

- Bullet lists and ordered steps.

- Clear topic sentences.

- Tables and comparison blocks.

- TL;DR summaries.

The same thinking holds true for how you structure your broader content library:

- Build comprehensive pillar pages (2,000+ words with structured sections).

- Cluster articles underneath using a clear hierarchy and internal links. FAQs, how-to articles, and resource guides are good candidates to branch out from the pillar.

- Link these pages together so AI models can sense your topical authority and comprehensiveness in your niche.

- Keep your pillar content updated every 3–6 months to keep it fresh, as recency is a major factor in whether AI systems choose to reference it.
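The refresh cadence above is easy to automate. The sketch below flags pages whose last update is older than a threshold. The page inventory, the function name `stale_pages`, and the 180-day default (roughly the upper end of the 3–6 month window) are all assumptions for illustration.

```python
from datetime import date


def stale_pages(
    pages: dict[str, date], today: date, max_age_days: int = 180
) -> list[str]:
    """Return URLs last updated more than max_age_days ago, oldest first.

    `pages` maps a URL to its last-updated date (hypothetical inventory).
    """
    overdue = [
        (last_updated, url)
        for url, last_updated in pages.items()
        if (today - last_updated).days > max_age_days
    ]
    return [url for _, url in sorted(overdue)]
```

Feeding it a CMS export on a schedule would give a simple refresh queue for pillar content.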

We’ve broken this down in detail in our blog on building internal linking maps for topical authority. It’s worth a read.

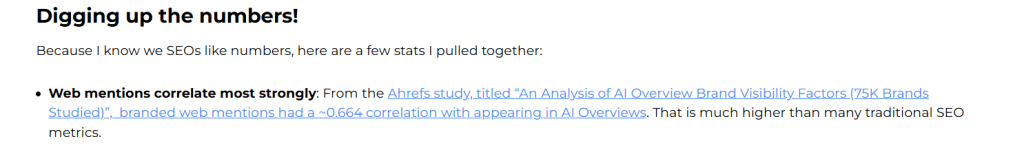

Strategy 2: Provide verifiable, attribution-friendly information

LLMs cite content they can trust. If you can’t back a claim with evidence, it’s impossible to trust.

AI models favor content rooted in primary sources, because that is what earns authority and gets cited. For example:

- Industry research

- Product documentation

- Proprietary data

- Expert analysis

This isn’t a new rule invented for AI. It’s what good SEO content should have always been. The difference now is that AI has made transparency non-negotiable. Think about it: if your article makes big claims without saying where the data came from, why would an AI risk quoting you?

To improve AI discoverability and increase your odds of being referenced, make verification traceable as part of your AI content citation strategy:

- Attribute data clearly, with links or source callouts.

- Explain the methodology and any limitations.

- Add context instead of pasting raw statistics.

- Refresh dated numbers—don’t wait for annual updates.

Sharing stats isn’t enough. What earns citations is the insight around them. It shows that you understand what the data implies, not just where it came from.

Here’s a snippet of that from our own blog:

That’s the kind of structure AI can easily extract: name the source, explain the meaning, and give the context. The trick is to lead with evidence, then layer your own insights on top.

Doing so sends a credibility signal to both humans and machines, and makes your content easier for LLMs to extract as a clean, citation-ready nugget. Plus, it separates original thought from recycled summaries.

Strategy 3: Build expert insights that AI can’t find anywhere else

The fastest path to making content visible to AI models is to stop recycling and start publishing proprietary content that competitors can’t replicate.

LLMs don’t need another reworded summary of page-one results. What they need is unique content that fills gaps and expands context. Original research becomes a moat here.

Even so, it doesn’t have to come from large-scale studies or expensive datasets. At Mavlers, we leverage the insights we already own daily and use them for content optimization for LLMs:

- Usage data: Surveys and product usage stats that highlight patterns AI can’t infer elsewhere.

- First-hand experience: Case studies, experiments gone right (or wrong), and the behind-the-scenes decisions.

- Expert interviews and quotes: Takeaways from in-house SMEs and trusted industry peers.

Finally, when you publish new data or unique viewpoints, you become the primary source. That is content AI systems are happy to surface, reference, and attribute, because it expands the available knowledge base rather than recycling it.

Beyond unique insights, filling content gaps also goes a long way in optimizing content for AI outputs. Here’s how:

- Extend an idea that the competitors only mention briefly.

- Provide execution details where most articles stay at the theoretical level.

- Challenge outdated assumptions and show what has changed.

- Add nuance or specialization rather than broad commentary.

This helps your content move the discussion forward and show up in synthesized responses and citations for context.

Strategy 4: Optimize technical foundations for AI citation

That said, even the best content won’t get cited if AI can’t find or understand it. It’s the technical optimization part of your AI content citation strategy that ensures your ideas, data, and insights are visible and clear.

1. Structured data

Use schema markup for articles, FAQs, products, reviews, and authors. Assign unique IDs (@id) to entities like executives, products, or brands. This builds a semantic graph that AI can read to understand relationships and accurately represent your content.
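As a rough illustration of the `@id` idea, the Python sketch below builds a small JSON-LD graph in which an article points to its author and publisher by `@id` instead of repeating their details. The schema.org types and properties are real. The URL patterns, the function name `build_article_jsonld`, and all sample values are assumptions you would adapt to your own site.

```python
import json


def build_article_jsonld(
    site: str, slug: str, headline: str,
    author_name: str, author_slug: str, org_name: str,
) -> str:
    """Return a JSON-LD string linking Article, Person and Organization by @id.

    The @id URL patterns below are hypothetical; any stable, unique URL works.
    """
    org_id = f"{site}/#organization"
    author_id = f"{site}/authors/{author_slug}#person"
    graph = {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id,
             "name": org_name, "url": site},
            {"@type": "Person", "@id": author_id,
             "name": author_name, "worksFor": {"@id": org_id}},
            {"@type": "Article", "@id": f"{site}/{slug}#article",
             "headline": headline,
             # References by @id tie the entities into one graph.
             "author": {"@id": author_id},
             "publisher": {"@id": org_id}},
        ],
    }
    return json.dumps(graph, indent=2)
```

The returned string would go inside a `<script type="application/ld+json">` tag in the page’s HTML. Reusing the same `@id` values on every page keeps the entities consistent across the site.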

2. Crawlable content

Most AI crawlers read the HTML your server returns and do not execute JavaScript. Server-side rendering ensures key text, schema, and metadata are immediately accessible. Quick test: turn off JavaScript. If important content disappears, those crawlers likely won’t see it either.
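The JavaScript-off test can also be approximated in code. This sketch takes the raw HTML a server returns, ignores everything inside `<script>` and `<style>` tags, and reports which key phrases are missing from the remaining text. It uses only Python’s standard library. The function name `missing_without_js` and the sample phrases are hypothetical, and a real audit would fetch the HTML first.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_without_js(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the server-rendered text."""
    parser = _VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in key_phrases if p.lower() not in text]
```

Any phrase the function returns exists only after client-side rendering, which makes it a candidate for server-side rendering.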

3. Emerging AI protocols

- IndexNow: Notifies engines and AI systems when you add, update, or delete content.

- llms.txt: A proposed convention for signaling where your authoritative content lives.

Adopting these early can distinguish your brand as AI-citable.
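For IndexNow, a submission is a single JSON POST listing the URLs that changed. The sketch below only builds the request, so you can inspect it before sending anything. It assumes the shared `api.indexnow.org` endpoint and a key file hosted at the site root as `<key>.txt`. The key and URLs in the usage note are placeholders, and the function name `build_indexnow_request` is hypothetical.

```python
import json
from urllib.parse import urlparse
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(urls: list[str], key: str) -> Request:
    """Build (but do not send) an IndexNow bulk submission request.

    Assumes the key file lives at https://<host>/<key>.txt.
    """
    if not urls:
        raise ValueError("urls must not be empty")
    host = urlparse(urls[0]).netloc
    if any(urlparse(u).netloc != host for u in urls):
        raise ValueError("all URLs in one submission must share a host")
    payload = {
        "host": host,
        "key": key,
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
```

For example, `build_indexnow_request(["https://www.example.com/blog/new-post"], "0123456789abcdef0123456789abcdef")` returns a request you could pass to `urllib.request.urlopen` once the key file is actually in place.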

4. Technical SEO hygiene

- Performance & speed: Fast pages increase crawl reliability.

- Mobile optimization: Responsive design ensures broad accessibility.

- Site architecture & linking: Logical hierarchies keep content discoverable.

- Schema & code quality: Clean markup reduces ambiguity.

- HTTPS security: AI results trust and favour secure sites.

The road ahead

There is no debate that organic search still fuels most discovery. But now, AI has created a parallel layer of visibility. Visibility that no longer starts on your website. It starts inside AI experiences that answer questions, shape intent, and influence perception in real time.

Expect volatility. Anticipate more SERP experiments. Plan for AI citations to accelerate.

But the mission hasn’t changed: help users find what they need and earn their trust. A forward-looking AI content citation strategy is the roadmap to that.
